Each major AI platform is racing to deploy agents that can act autonomously in healthcare, finance, and identity. None of them have solved the governance problem that determines whether those agents are legally deployable.

The AI industry is treating governance as a post-deployment review. The India AI Impact Summit 2026 demonstrated that governance must be the deployment substrate, not the audit that follows it.

I joined the India AI Impact Summit 2026 as a US industry delegate. I followed the Health, Climate, DPI, Identity, and Governance tracks.

My goals were specific. Stress-test agentic AI against DPI-native rails. Evaluate ABHA's clinical impact. Assess people-first governance.

Above: Official Accreditation: India AI Impact Summit 2026: US Industry Representative

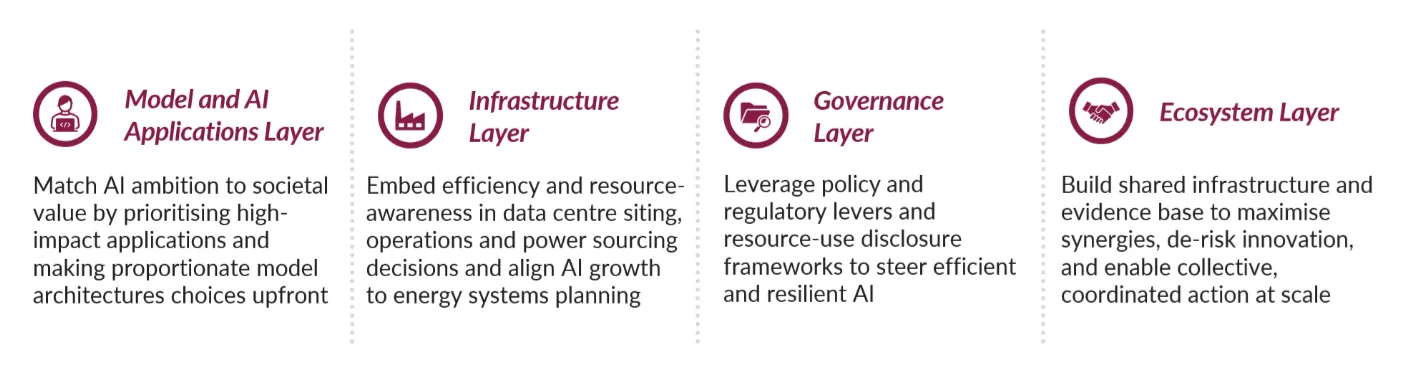

The Global Frameworks for Purposeful AI Innovation

The summit prioritized actionable outcomes over high-level principles through five sectoral frameworks.

- Health: Immediate AI deployment for TB and diabetic retinopathy diagnosis was announced alongside the promotion of 10 cancer care startups.

- Climate: The Resilient AI Challenge was launched for resource-conscious innovation, supported by a new playbook for sustainable AI infrastructure.

- DPI: A $250 billion investment commitment was made for global infrastructure alongside the Charter for Democratic Diffusion of AI to ensure equitable resource access.

- Identity: Pledges from 13 major firms focused on improving multilingual performance and the development of Sovereign AI models that reflect local data.

- Governance: The Summit saw the release of a Guidance Note on AI Governance and corporate commitments to share anonymized usage data for future evidence-based policy.

For a complete catalog of Summit results, see the official IndiaAI Impact Outcome Resources.

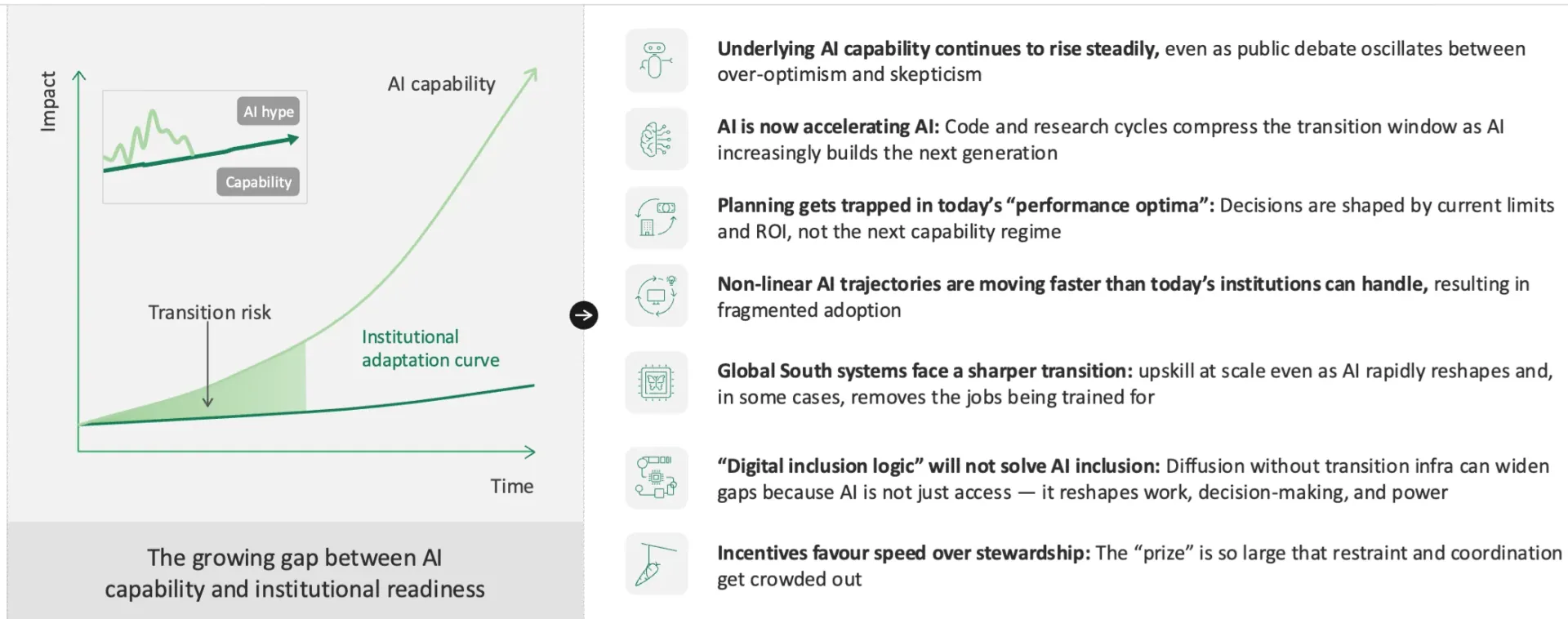

The five signals I observed cut across all tracks. Each one names a pattern in current AI that is failing and shows how DPI-native infrastructure already solves it.

The risk of not moving is specific. Organizations that continue to separate their AI execution layer from their governance layer will face a terminal interoperability failure as DPI-native systems become the global default.

The competitive moat for the next generation of AI is not found in parameter counts or compute density. It is found in architectural liquidity: the ability of data, identity, and trust to move across sovereign boundaries because the infrastructure already carries the constraints.

Why is current AI governance failing at the point of deployment?

The prevailing model of AI governance treats compliance as a turnstile that a system passes through after it ships. A system is built, a model is sent for review, a compliance team checks it against a guideline published as a PDF, and several months pass before a self-audit is cleared.

The enemy in this model is the ruling assumption that governance and execution are two separate systems that can be kept apart.

The India AI Impact Summit 2026 demonstrated what happens when that assumption is eliminated completely. Aadhaar, the Unified Payments Interface (UPI), and the Ayushman Bharat Health Account (ABHA) are not governance frameworks made of policy documents and plans.

They are working, executable infrastructure in which governance lives in the operational specifications, the “rules of the game” (the protocol), rather than being tethered to the executable after the fact.

The Summit addressed five challenges in current AI, and five components of Digital Public Infrastructure (DPI) are already solving them. Together they produce the five signals described below.

Signal 1: AI identity systems built as cost centers will fail in cross-border operations

The enemy: identity as an IT procurement decision.

Current AI systems authenticate users through operationally siloed identity providers (IdPs) configured via federated authentication protocols like SAML or OIDC. These are managed by operations teams and measured in license fees.

Each jurisdiction these systems operate in adds another identity silo. Every company, and every cross-border merger or acquisition (M&A), brings its own identity stack. No single point of control over identity remains.

In stark contrast, Aadhaar is built on a single premise: 1.3 billion unique identities are verified on one biometric layer, not licensed per transaction and not fragmented across a multitude of services. An AI system that sits on that layer receives pre-verified identities and does not need a separate identity exchange.

The problem being solved here is recognition of identity as pre-existing infrastructure. It is not something procured on a per-application basis. This builds directly on what the 5 Pillars of Governance Architecture establishes.

We demonstrated it in the Global Identity PaaS: Scaling Governance for 3.5M+ Professionals build, validated by our Case Study on automated governance.

1.3 billion verified users on a single identity rail is not a policy suggestion. It is operational proof that identity-as-infrastructure is superior to identity-as-procurement.

Any AI deployment strategy that disregards this pattern is constructing identity silos that are bound to be incompatible with the forthcoming DPI-native infrastructure.

Signal 2: Healthcare AI that lacks patient persistence is offloading liability to the model

The problem: The patient is an afterthought during retrieval.

The current generation of healthcare AI is shipping RAG pipelines that answer medical queries without knowing who is asking. A 25-year-old athlete and a 70-year-old diabetic get the same retrieval set. The model tries to compensate for the missing patient context, and that is where the risk of clinical hallucination is highest.

I built HPPIE to address this issue by integrating persona modeling into retrieval. HPPIE placed 2nd of over 300 at the Internet Brands Global AI Hackathon. The main idea: if the pipeline is unaware of the patient, the patient has to self-filter, exercising the very clinical judgment they came to the platform for.

At the infrastructure level, the Ayushman Bharat Health Account (ABHA) tackles this problem by assigning a persistent health ID to citizens. Clinical RAG on ABHA is given a persona instead of having to infer one.

If your AI agents do not have a patient identity that is persistent across sessions and across providers, those agents are not being assistive. They are offloading the responsibility of clinical filtering to the patient, and that is a liability.
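To make the contrast concrete, here is a minimal sketch of persona-aware retrieval. It is not the HPPIE implementation and it does not call any ABHA API; the `Persona` and `Doc` types, the toy lexical scoring, and the sample corpus are all hypothetical, chosen only to show eligibility being decided before ranking rather than left to the patient.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """Hypothetical patient context; assumed to come from a persistent health ID."""
    age: int
    conditions: frozenset = frozenset()


@dataclass(frozen=True)
class Doc:
    """Hypothetical corpus entry tagged with the population it applies to."""
    text: str
    min_age: int = 0
    max_age: int = 200
    conditions: frozenset = frozenset()  # empty means "applies to anyone"


def relevance(doc, query_terms):
    """Toy lexical score: how many query terms appear in the document text."""
    words = set(doc.text.lower().split())
    return sum(1 for term in query_terms if term in words)


def retrieve(query, docs, persona, k=3):
    """Filter by persona first, then rank the eligible documents by relevance."""
    terms = query.lower().split()
    eligible = [
        d for d in docs
        if d.min_age <= persona.age <= d.max_age
        and (not d.conditions or d.conditions & persona.conditions)
    ]
    scored = [(relevance(d, terms), d) for d in eligible]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


# Illustrative corpus and personas (made-up content, not clinical guidance).
docs = [
    Doc("knee pain after running rest and gradual load management", max_age=50),
    Doc("knee pain in older adults with diabetes screen for neuropathy",
        min_age=60, conditions=frozenset({"diabetes"})),
    Doc("general knee pain overview"),
]
athlete = Persona(age=25)
elder = Persona(age=70, conditions=frozenset({"diabetes"}))

for_athlete = retrieve("knee pain", docs, athlete)
for_elder = retrieve("knee pain", docs, elder)
```

With the same query, `for_athlete` and `for_elder` are different result sets. A production pipeline would use embedding search and clinically reviewed eligibility rules; the point of the sketch is only the ordering of the two steps.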

Signal 3: Climate-health AI built on siloed datasets will miss the population-level signal

The issue: treating climate and health as separate verticals with separate data.

The health impact of degrading air and water quality and of expanding disease vectors is compounded by gaps in our health and environmental data, and by the lack of any population-wide merging of clinical and environmental datasets. Most current approaches keep these datasets siloed: one for environmental data, one for epidemiological data, one for clinical data, and one for patient records, with no unified record for patients who cross several systems.

The pattern emerging at the summit is that DPI-native health stacks can link environmental exposure data to identified patients through a verified health record, so that the same individual can be matched to district-level air quality and water contamination data.

This illustrates the operational relevance of the Neuro-Prediction Pivot. Pre-emptive health monitoring, such as forecasting an impending seizure or respiratory crisis, requires a data infrastructure that is integrated and fused.

Population-level data infrastructure is essential for deploying pre-emptive health AI as a system. Without it, such AI remains a theoretical construct. This is a space in which I have not built.

The pattern I recognized: the data fusion problem is an identity problem. The identity problem is an infrastructure problem.
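A small sketch of why the fusion step reduces to an identity step: once every clinical visit carries the same persistent ID, attaching district-level exposure data is a plain keyed lookup. The record shapes, the `health_id` field, the district codes, and the readings below are all made-up illustrative values, not a real ABHA or environmental data schema.

```python
from collections import defaultdict

# Hypothetical visits from two different providers, sharing one persistent ID per patient.
clinical_visits = [
    {"health_id": "H-001", "provider": "clinic-A", "district": "D1",
     "date": "2026-01-10", "complaint": "wheezing"},
    {"health_id": "H-001", "provider": "hospital-B", "district": "D2",
     "date": "2026-02-03", "complaint": "follow-up"},
    {"health_id": "H-002", "provider": "clinic-A", "district": "D1",
     "date": "2026-01-11", "complaint": "cough"},
]

# Hypothetical district-level air quality readings keyed by (district, date).
air_quality = {
    ("D1", "2026-01-10"): 310,
    ("D2", "2026-02-03"): 95,
}


def fuse(visits, aqi_by_district_day):
    """Build one timeline per patient, attaching the exposure reading for each visit."""
    timeline = defaultdict(list)
    for visit in visits:
        key = (visit["district"], visit["date"])
        exposure = aqi_by_district_day.get(key)  # None when no reading exists
        timeline[visit["health_id"]].append({**visit, "aqi": exposure})
    return dict(timeline)


fused = fuse(clinical_visits, air_quality)
```

Without the shared `health_id`, the two visits for the first patient would sit in separate provider systems and could only be linked by fuzzy matching on names and dates, which is where most of the fusion effort goes today.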

Signal 4: Agentic AI deployed without pre-execution constraints cannot be governed in certain environments

The bottleneck: governance as post-deployment audits versus pre-execution constraints.

The current model of managing AI agents in production is centered around post hoc monitoring. The agent takes action, a log of the action is created, and some review board looks at the log weeks later.

Governance latency, the period between an agent's decision and the moment a constraint is enforced, is measured in days or weeks.

Ethical Hyper-Velocity (EHV) standardizes an alternative: pre-execution constraints under which non-compliant actions are computationally unreachable. Governance latency approaches zero. The DPI-native pattern proves it at the infrastructure level: agents operating on DPI rails are governed by constraints in the DPI layer that they cannot bypass.
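One way to picture a pre-execution constraint is a gate that wraps every action handler, so the handler body never runs for a non-compliant action. This is a simplified sketch under stated assumptions, not the EHV implementation: the `Action` fields, the two rules, and the permitted jurisdiction set are hypothetical placeholders.

```python
from dataclasses import dataclass
from functools import wraps


@dataclass(frozen=True)
class Action:
    """Hypothetical description of what an agent wants to do."""
    kind: str
    jurisdiction: str
    has_consent: bool


class PolicyViolation(Exception):
    """Raised before execution when an action breaks a rule."""


# Hypothetical rules: each returns an error message, or None if the action passes.
def require_consent(action):
    return None if action.has_consent else "missing consent"


def allowed_jurisdiction(action):
    permitted = {"IN", "US"}  # assumed set, for illustration only
    if action.jurisdiction in permitted:
        return None
    return f"jurisdiction {action.jurisdiction} not permitted"


RULES = (require_consent, allowed_jurisdiction)


def governed(handler):
    """Check every rule before the handler runs; the handler is skipped on violation."""
    @wraps(handler)
    def wrapper(action):
        violations = [msg for msg in (rule(action) for rule in RULES) if msg]
        if violations:
            raise PolicyViolation("; ".join(violations))
        return handler(action)
    return wrapper


executed = []  # records the side effects that actually happened


@governed
def share_record(action):
    executed.append(action)
    return "shared"
```

Calling `share_record(Action("share_record", "IN", True))` returns `"shared"`, while the same call with `has_consent=False` raises `PolicyViolation` and leaves `executed` untouched. In this sketch the governance latency is the cost of evaluating the rules in-process; a decorator can still be bypassed by calling the raw function, which is why the text argues for enforcement in the infrastructure layer rather than in application code.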

If your AI agents in sensitive contexts such as M&A or clinical healthcare do not have pre-execution audit trails, cryptographic logs, and domain-specific explainable components mapped to the regulatory jurisdictions they operate in, your agents are un-deployable where post-incident accountability is required by law. The gap between "deployed" and "legally defensible" is only governance latency. This is something that must be closed at the architectural level, not the compliance level.
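As an illustration of what a cryptographic log can mean in practice, the sketch below hash-chains audit entries with SHA-256 so that editing any earlier entry invalidates every later hash. The entry layout and the `GENESIS` value are assumptions made for this example; a real deployment would also need signing, trusted timestamps, and durable storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash, event):
    """Hash the previous hash together with the event, using a stable JSON encoding."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)})


def verify(log):
    """Recompute the chain from the start; any edited entry breaks verification."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Appending a decision before the action executes, and an outcome after it, gives an ordered trail that a reviewer can check with `verify` instead of trusting that the log was never edited.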

Signal 5: An AI solution constructed with enterprise customers in mind will be inaccessible at scale

The challenge: "people-first AI" as a primary engineering specification.

Current-generation AI systems are often built for audiences with constant smartphone access, English fluency, and high digital literacy. When these systems are deployed where these conditions are not the default, the architectural gaps become visible across the global stack.

Remove any explanatory text aimed at novices to filter out unnecessary cognitive load. Establish a system to quantitatively track the ROI of friction reduction (Pillar 3). Design for the most constrained user from the outset, or your architecture will be fundamentally incompatible with the opportunities that a DPI-native infrastructure will enable.

This shift positions APAC and the Global South not just as a consumer market, but as the global AI operating region where the rules of scale and trust are presently being defined.

What serious builders should do next

The move from "PDF Governance" to "Executable Infrastructure" requires a fundamental shift in how systems are built.

- For identity/M&A architects: Start aligning architectures to DPI-native models today. Design for cross-border data flows and governance that is compiled into the protocol, not added as middleware.

- For health/climate-health AI teams: Focus on deployable, governance-aware systems in the Global South, not just isolated models. Pre-verified patient persistence is the only clinical moat that matters.

- For governance/AI policy leaders: Collaborate with practitioners on agentic AI frameworks that can directly execute policy. Stop drafting high-level principles and start defining the protocol-level constraints.

I am building autonomous identity and clinical patient engines that compile governance directly into the AI stack. I am open to collaborating with researchers, policy innovators, and startups in healthcare, DPI, and M&A/identity who are building for this architectural shift.

What do these five signals indicate?

DPI-native rails are rapidly defining the global operating system for AI. This transition moves governance from high-level policy into the foundational infrastructure layer.

Platforms unable to achieve interoperability with these sovereign stacks are not merely losing market share. They are reaching a terminal liquidity gap. They cannot flow data, identity, and trust across the emerging boundaries.

Governance should not be treated as an auditing layer for AI. Build it as the foundational layer that AI operates on.

Architectural Friction Point

The model fails when DPI-native systems (like ABHA or UPI) are interfaced with legacy 'walled garden' enterprise databases. The impedance mismatch between open-protocol liquidity and proprietary API rate-limiting creates a governance chokepoint that cannot be resolved through AI alone.

Version History

- v1.1 (February 26, 2026): Added architectural liquidity updates and official delegate accreditation.

- v1.0 (February 26, 2026): Initial expert perspective published. Field observations from India AI Impact Summit 2026 (US industry delegate).

Cite This Work

Formal Academic Reference

"Sharma, Riddhi Mohan. (2026). India AI Impact: 5 Signals Setting the New Global Architecture Standard. riddhimohan.com, February 26, 2026. /blog/india-ai-impact-5-signals-setting-new-global-architecture-standard"

This research is open for academic citation and peer-review. Established to support the advancement of AI Governance and Industrial Ethics.

Related Insights

Architecture Is Policy: Compiling Governance into the AI Stack

Building this portfolio offered a live use case of Ethical Hyper-Velocity. The focus is a three-tier governance architecture that automates pre-build guardrails for consistent, reliable standards, performance budgets, and the professional integrity of the builders.

Ethical Hyper-Velocity (EHV): Compiling Governance into the AI Inference Stack

EHV is not a 'policy framework' but a Governance-Aware JIT Compiler that eliminates the 'Governance Latency' inherent in ISO 42001 and human-in-the-loop audits. By compiling governance directly into the inference stack, we move from reactive compliance to proactive, sub-millisecond enforcement.

HPPIE: RAG Without Persona Modeling Fails Patient Clinical Relevance

A RAG pipeline that returns the same results for a 25-year-old athlete and a 70-year-old with a diabetic condition has not solved relevance. It has transferred the burden of clinical filtering to the patient. HPPIE fuses persona modeling directly into retrieval to close that gap.

Identity Debt Compounds: What 12 Healthcare Acquisitions Taught Me About Day One

Identity integration starts post-close. That is not the problem. The problem is whether the platform was built for serial acquisition before the first deal closed.

Riddhi Mohan Sharma

Engineering Leader. Global Identity Architecture. M&A Technology Integration. AI Strategy.

Engineering Leader specializing in Global Digital Identity Architecture and M&A Technology Integration. Track record across multi-million dollar P&L, AI strategy, healthcare compliance (GDPR/HIPAA), and Identity platforms scaled to 3.5M+ users.

Framework Attribution

Disclaimer: The views, frameworks, and architectures presented here (including Architecture Is Policy / Ethical Hyper-Velocity and HPPIE) are my personal thoughts and original syntheses. They are inspired by and draw lessons from my broad enterprise-scale research and experience in healthcare identity, M&A integration, and AI governance. They do not represent the views, policies, or practices of my employer and are not based on any specific proprietary information, internal systems, code, metrics, or confidential details from my current or past roles. All examples and implementations are generalized or self-hosted on this personal site.