This post describes a three-tier governance framework built on automated pre-build guardrails. The guardrails check every build for performance, accessibility, and the accuracy of professional claims before it ships.

Most professional portfolios are static snapshots: brittle dioramas that are eventually buried under a slow accumulation of technical debt.

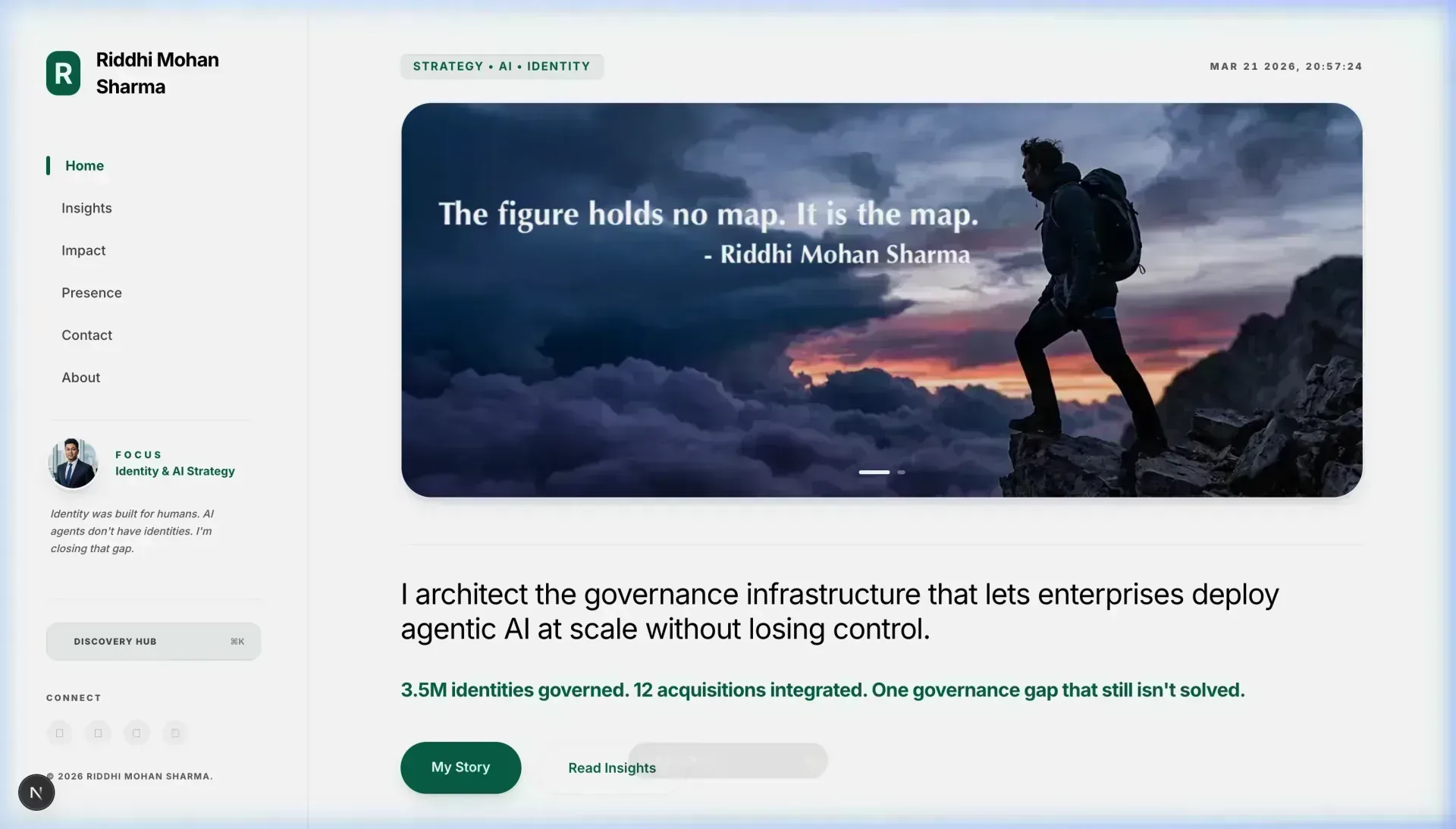

When I rebuilt Riddhimohan.com, I refused to take the easy route. I treated the site as a living piece of infrastructure, a representative use case of the enterprise governance architectures I design for AI agents.

The mission was Ethical Hyper-Velocity. It may sound like a contradiction, but it isn’t. It means scaling a professional presence while preserving the structural integrity expected of a $10B enterprise.

Above: RiddhiMohan.com as viewed on Mobile and Desktop devices, demonstrating visual stability and high-fidelity typography.

Why does manual governance fail at scale?

In contemporary systems, governance is a deployment assurance, not a human review issue. That distinction is significant. On this site, each deployment is subjected to an automated audit by custom Automated Governance guardrails.

The content guard enforces professional claims with the same consistency a bank applies to transactions. It doesn’t just “recommend” consistency; it blocks the pipeline if a legacy title tries to reach production.

It treats metadata as a legal contract.
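As a rough illustration of how such a content guard could work, the sketch below scans page metadata for retired titles and reports every match. The function name `findLegacyTitles`, the `LEGACY_TITLES` list, and the page object shape are all hypothetical; the actual guard script is not shown in this post.

```javascript
// Hypothetical list of retired titles; the real guard's rules are not shown in this post.
const LEGACY_TITLES = ["Legacy Title A", "Legacy Title B"];

/**
 * Scan page metadata for retired titles (case-insensitive substring match).
 * Returns one entry per violation: { path, field, term }.
 */
function findLegacyTitles(pages, legacyTitles = LEGACY_TITLES) {
  const violations = [];
  for (const page of pages) {
    for (const field of ["title", "description"]) {
      const text = (page[field] ?? "").toLowerCase();
      for (const term of legacyTitles) {
        if (text.includes(term.toLowerCase())) {
          violations.push({ path: page.path, field, term });
        }
      }
    }
  }
  return violations;
}

// Example: one clean page and one page that still carries a retired title.
const pages = [
  { path: "/", title: "Current Title", description: "Current description" },
  { path: "/about", title: "About", description: "Previously listed as Legacy Title A" },
];
const violations = findLegacyTitles(pages);

// In a pipeline, a non-empty result would translate into a non-zero exit code.
const contentGuardPassed = violations.length === 0;
```

In a CI step, the caller would print the violations and exit non-zero when `contentGuardPassed` is false, which is what turns the check from a recommendation into a block.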

The challenge in the enterprise is not building Agentic AI. The challenge is governing it at a scale where the velocity exceeds the human audit capacity.

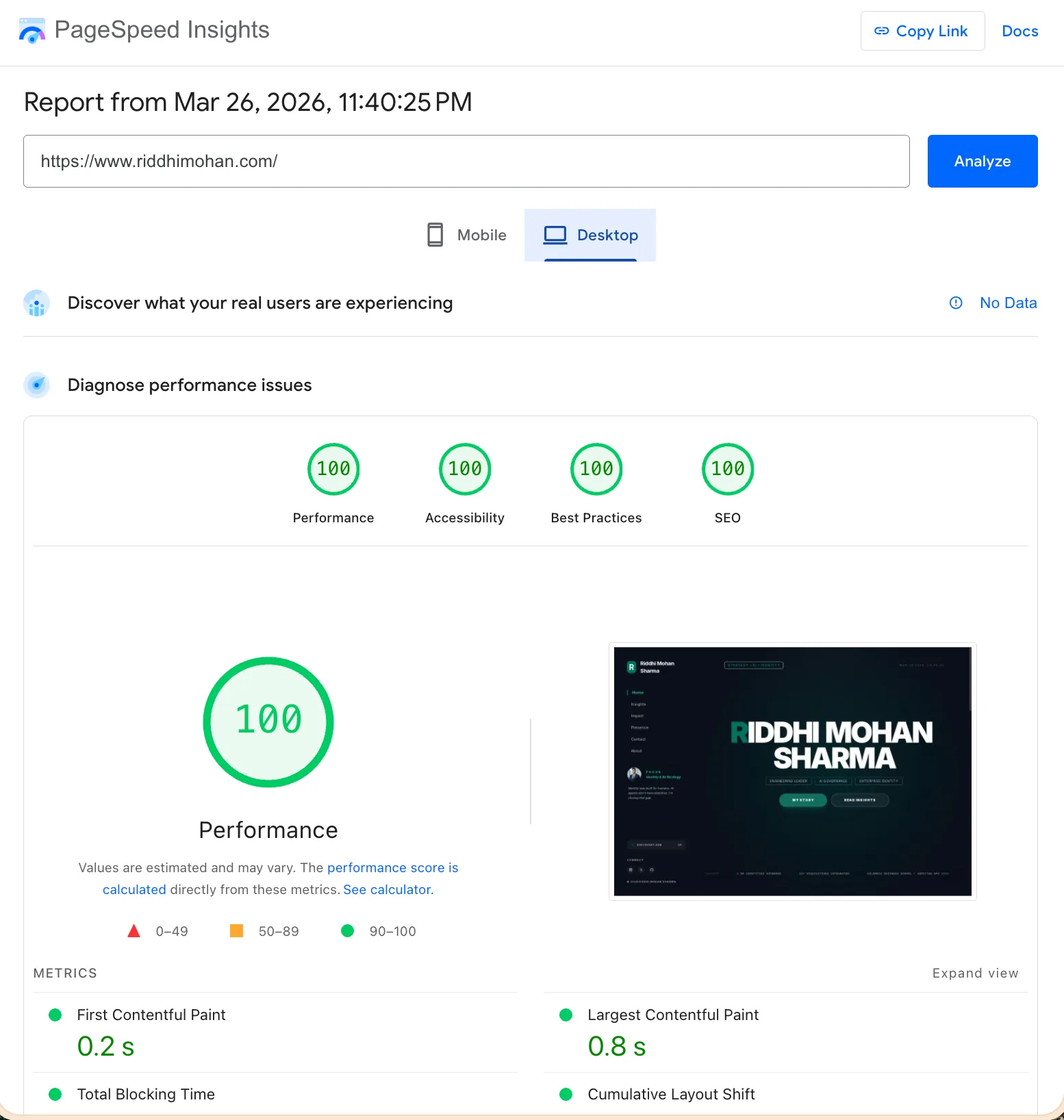

Each build on this site is benchmarked against Google’s PageSpeed Insights. The Desktop Performance score of 100/100 at build time is no accident.

What is the role of automated guardrails in ensuring integrity?

Here we shift from a culture of neglect to automated standards. If the governance fails, the deployment fails. Simple as that.

Engineering Leaders need to own the automated guardrail roadmap personally. This cannot be passed off to Marketing. This model extends directly from my work on Global Identity PaaS.

Performance is more than a number; reducing it to one would be a con. A slow site is a broken brand.

In this architecture, a Core Web Vitals (CWV) guard is always in place to protect the site.

From Passive Guardrails to Agentic Enforcement

Phase 1 of the three-tier architecture shown above consists of structural guardrails. Ethical Hyper-Velocity (EHV) becomes operationally complete in Phase 2, where CWV metrics stop being numbers to monitor and become laws of physics, enforced by an AI agent with remediation authority.

The distinction is important. A conventional guardrail alerts. An agentic guardrail steps in.

The AI agent in this framework does more than just identify a slow Largest Contentful Paint (LCP). It finds the offending code change, isolates the architectural root cause, and creates a fix.

Deployment is prevented until the fix is executed, typically by the agent itself.

To govern CWV metrics as 'laws of physics' enforced by AI agents, we move from periodic monitoring to Active Enforcement and Automated Remediation.

Core Technical Structural Build

This build rests on three distinct Agentic Roles:

| Role | Responsibility | Technical Tooling |
|---|---|---|
| The Observer | Continuous runtime and build-time performance auditing | Lighthouse CI, metrics library, Puppeteer |
| The Lawmaker | Defines non-negotiable thresholds (the "Physical Laws") | cwv-guard.mjs, budget.json |
| The Remediator | The AI agent; analyzes regressions and applies fixes | LLM-driven diff analysis, Image Tuning API, Next/Image automation |

1. The 'Physical Law' Layer (Pre-Commit/Pre-Push)

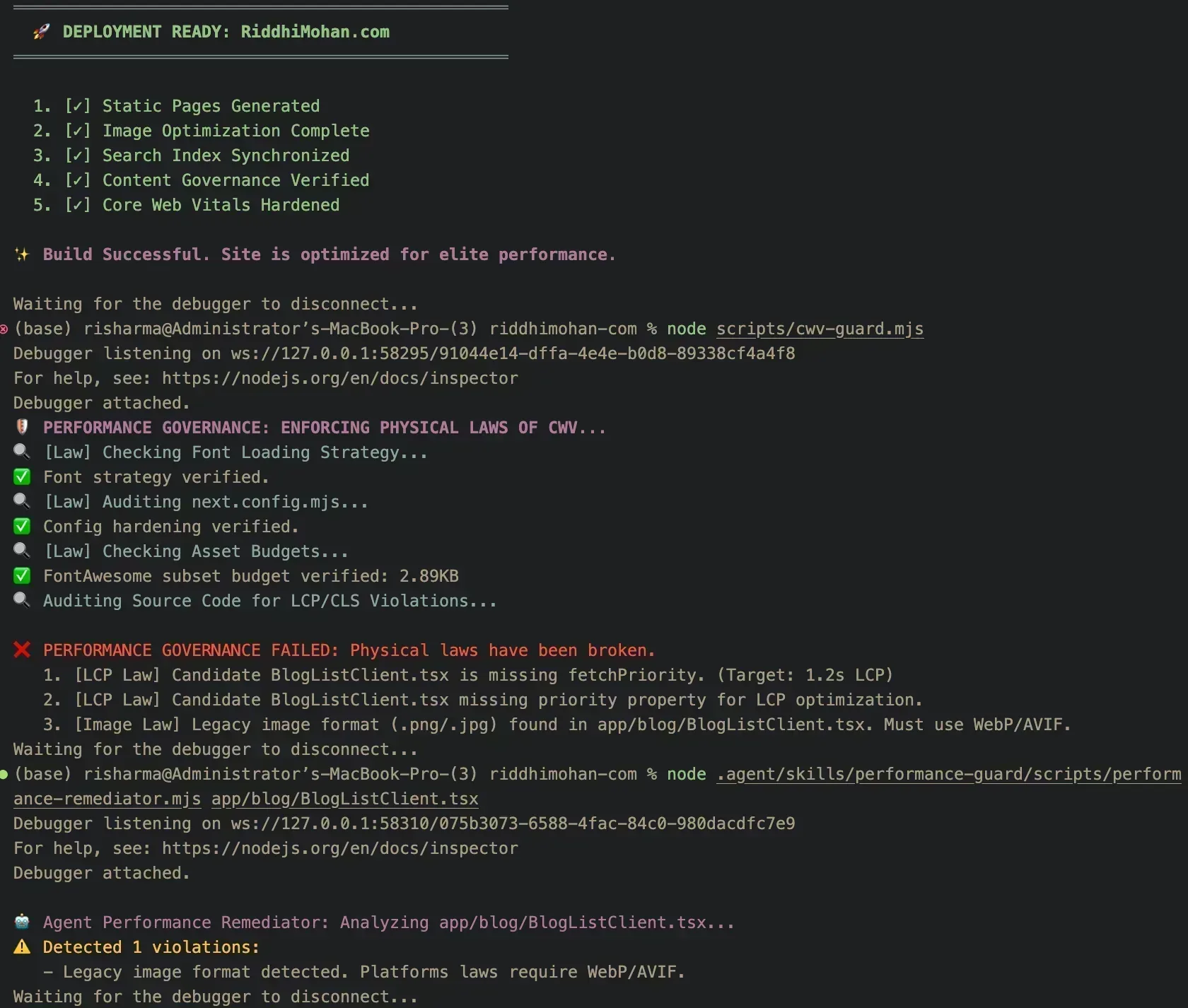

The architecture extends scripts/cwv-guard.mjs to work against a Budget Policy. It no longer just looks for 'legacy colors'; it runs a gated check against real performance metrics.

Shadow Build Mechanism: A GitHub Action or Git hook initiates a 'Shadow Build'.

Enforcement: If LCP exceeds 1.2 s or CLS exceeds 0.1 during the shadow build, the build is marked as a 'Hard Fail'.
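A minimal sketch of that enforcement check follows. It assumes the shadow build produces a standard Lighthouse JSON report, where each audit exposes a `numericValue` (milliseconds for LCP, unitless for CLS). The `budget` object shape and the `checkPhysicalLaws` name are assumptions for illustration; the post names budget.json but does not show its schema.

```javascript
// Assumed shape for budget.json; thresholds are the ones stated above.
const budget = { lcpMs: 1200, cls: 0.1 };

/**
 * Compare a Lighthouse report against the budget.
 * Reads `numericValue` from the LCP and CLS audits of the report JSON.
 */
function checkPhysicalLaws(lighthouseReport, limits = budget) {
  const lcpMs = lighthouseReport.audits["largest-contentful-paint"].numericValue;
  const cls = lighthouseReport.audits["cumulative-layout-shift"].numericValue;

  const violations = [];
  if (lcpMs > limits.lcpMs) {
    violations.push({ law: "LCP", actual: lcpMs, limit: limits.lcpMs });
  }
  if (cls > limits.cls) {
    violations.push({ law: "CLS", actual: cls, limit: limits.cls });
  }
  return { hardFail: violations.length > 0, violations };
}

// Trimmed-down stand-in for a shadow-build report (hypothetical values).
const sampleReport = {
  audits: {
    "largest-contentful-paint": { numericValue: 1450 },
    "cumulative-layout-shift": { numericValue: 0.02 },
  },
};
const verdict = checkPhysicalLaws(sampleReport);
```

Keeping the thresholds in data rather than in the script means the "law" can be reviewed and versioned separately from the code that enforces it.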

2. The Agentic Remediation Loop

The AI Agent is invoked whenever a 'Physical Law' is broken.

Context Injection: The agent receives the Lighthouse JSON report and the current Git diff.

Root Cause Analysis: The agent identifies which change caused the regression (e.g. “The new hero image in HomeClient.tsx lacks fetchPriority”).

The ‘Correction’ Ghostwriter: The agent creates a Remediation Diff.

Example: In an Image component, it might add priority={true}, or suggest changing a .png to .webp.
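The loop above can be approximated without a model to show the data flow. The sketch below is a rule-based stand-in for the LLM step, not the author's remediator: it reads a unified diff, looks only at added lines, and proposes the two fixes mentioned in the example. The function names and the sample diff are hypothetical.

```javascript
/** Collect added lines, tagged with their file, from a unified diff. */
function addedLines(diff) {
  const result = [];
  let file = null;
  for (const line of diff.split("\n")) {
    if (line.startsWith("+++ ")) {
      // File header, e.g. "+++ b/HomeClient.tsx"
      file = line.slice(4).replace(/^b\//, "");
    } else if (line.startsWith("+") && file) {
      result.push({ file, text: line.slice(1) });
    }
  }
  return result;
}

/** Rule-based stand-in for the LLM: propose fixes for newly added <Image> tags. */
function proposeRemediations(diff) {
  const proposals = [];
  for (const { file, text } of addedLines(diff)) {
    if (!text.includes("<Image")) continue;
    if (!/\bpriority\b/.test(text)) {
      proposals.push({
        file,
        reason: "Image added without priority",
        fix: text.replace("<Image", "<Image priority={true}"),
      });
    }
    if (/\.png\b/.test(text)) {
      proposals.push({
        file,
        reason: "Legacy .png format",
        fix: text.replace(/\.png\b/g, ".webp"),
      });
    }
  }
  return proposals;
}

// Hypothetical diff that adds a hero image to HomeClient.tsx.
const sampleDiff = [
  "--- a/HomeClient.tsx",
  "+++ b/HomeClient.tsx",
  "@@ -10,0 +11,1 @@",
  '+<Image src="/hero.png" alt="Hero" width={1200} height={600} />',
].join("\n");
const proposals = proposeRemediations(sampleDiff);
```

A real remediator would pass the same two inputs (report and diff) to a model and would need to verify that a suggested .webp asset actually exists before proposing the change.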

3. Agentic Workflow Integration

Step 1: Developer pushes code.

Step 2: Observer Agent performs a headless audit of the code's performance.

Step 3: The audit detects a regression (e.g. CLS due to a new ad banner).

Step 4: Remediator Agent evaluates the React component, detects the absence of height/width attributes, and produces a fix.

Step 5: The developer receives a comment, “Governance Error: CLS Law Violated. [View Remediation Diff]”, and the PR gets blocked.
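Steps 4 and 5 can be sketched as a small check that finds unsized images and produces the blocking comment. This is an illustrative approximation under stated assumptions: the regex only handles plain `<img>` tags whose attributes contain no `>` character, and the function names and remediation URL are hypothetical.

```javascript
/** Find <img> tags in component source that lack a width or height attribute. */
function findUnsizedImages(source) {
  const tags = source.match(/<img\b[^>]*>/g) ?? [];
  return tags.filter((tag) => !/\bwidth=/.test(tag) || !/\bheight=/.test(tag));
}

/** Build the review result: block the PR when unsized images are present. */
function reviewPullRequest(source, remediationUrl) {
  const unsized = findUnsizedImages(source);
  if (unsized.length === 0) {
    return { blocked: false, comment: null, unsized };
  }
  return {
    blocked: true,
    comment: `Governance Error: CLS Law Violated. [View Remediation Diff](${remediationUrl})`,
    unsized,
  };
}

// Hypothetical ad banner component source with no reserved dimensions.
const adBanner = '<div className="ad"><img src="/ad.webp" alt="Ad" /></div>';
const review = reviewPullRequest(adBanner, "https://example.com/remediation.diff");
```

Reserving width and height lets the browser allocate space before the image loads, which is why their absence is a common cause of layout shift.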

What are the technical implications?

If a font subset exceeds its limit by even a single kilobyte, the build is blocked. Each line of code is checked for outdated colors and for accessibility compliance. Hashing collisions are avoided by preloading all assets with absolute path references.

Above: The Automated Governance build is hard-blocked if any LCP candidate is unoptimized or if legacy image formats are detected.

This site taught me a lesson for Agentic AI infrastructure. If you draft a policy and communicate it via PDF, it will be ignored. If you draft a policy and build it into the deployment pipeline, it will be enforced.

Trust and speed are not trade-offs. They are twins. You can only go fast when you can trust the brakes.

Verification & Build Readiness

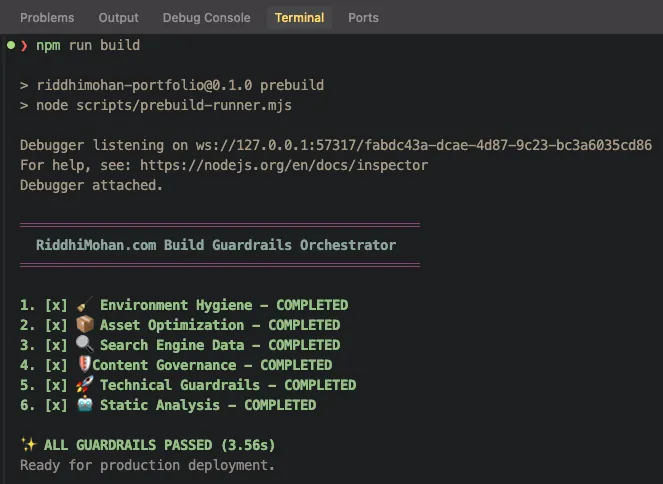

The following live captures from the automated deployment pipeline illustrate the results of this governance architecture. The site can only be deployed to production when each guardrail is green.

Above: The build summary shows that every static page going to production has passed the performance and professional-content checks.

Performance Verification

Real-time metrics demonstrate the effectiveness of the governance.

Desktop Performance (100/100)

A perfect Lighthouse score (100/100) reflects near-instant visual stability alongside elite performance.

Above: PageSpeed Insights Desktop Report showing 100/100 scores across all categories.

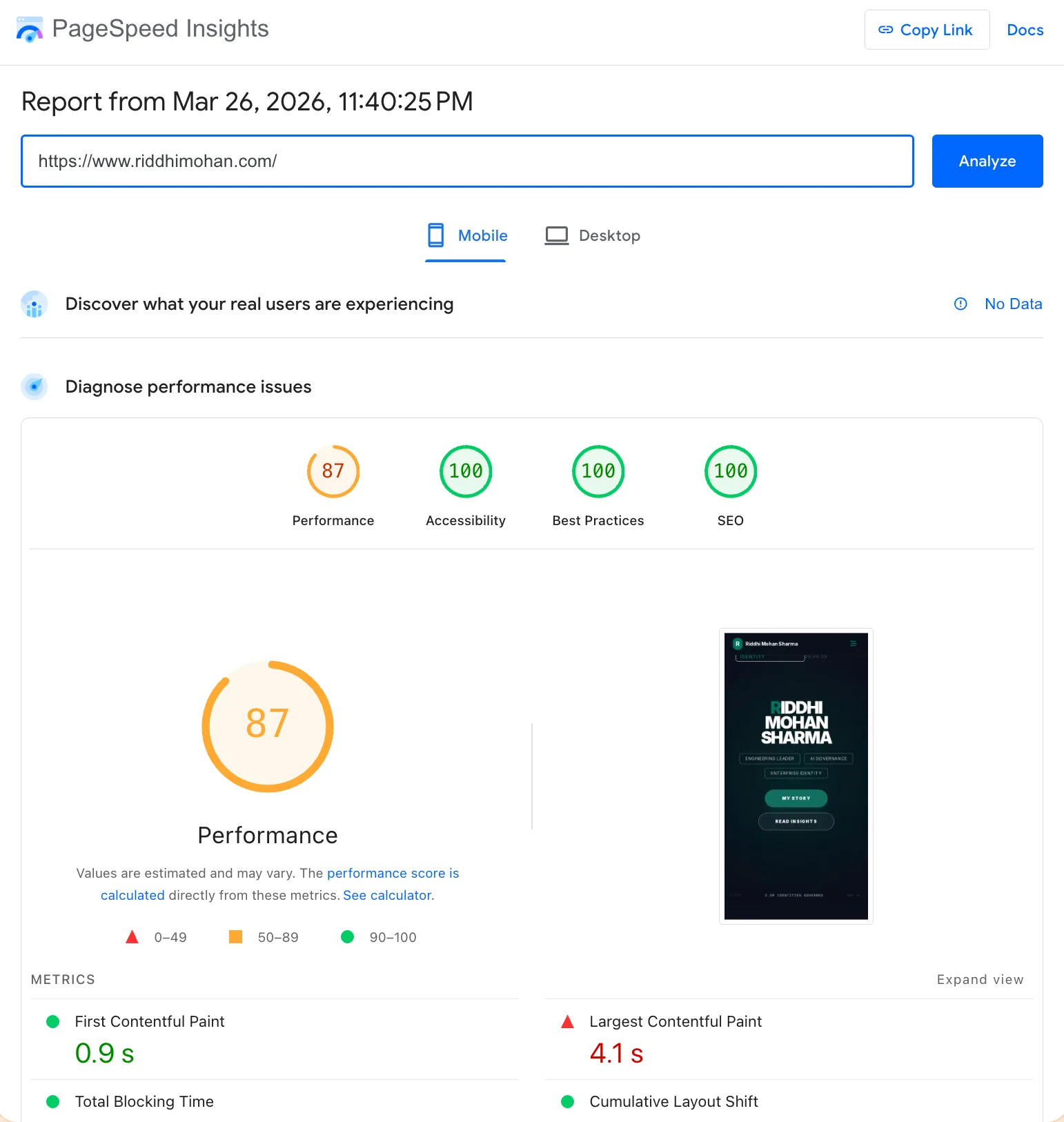

Mobile Performance (87/100)

Performance (87/100) / Accessibility (100/100) / SEO (100/100) / Best Practices (100/100)

Mobile tuning prioritizes brand clarity. To achieve zero layout shift, the architecture uses full-fidelity font preloading instead of low-bandwidth font simulations. That choice comes at a cost, reflected in the 87/100 mobile Performance score.

Above: PageSpeed Insights Mobile Report demonstrating high-performance and accessibility metrics.

Technical Index

- Case Reference: RMS-2026-AEG

- Framework: Ethical Hyper-Velocity Governance

- Methodology: Technical Guardrails, Automated Governance & Agentic Remediation

- Archival Priority: Live Execution (March 21, 2026)

- Status: Case Verified

Case Study History

- v1.0 (March 21, 2026): Case Study published. Live build results from Riddhimohan.com deployment.

- v1.1 (March 26, 2026): Updated core technical build, live build results from Riddhimohan.com deployment.

Questions this work raises

- Scalability of Agentic Remediation: How does the compute overhead of LLM-driven diff analysis scale when managing thousands of microservices?

- Governance Latency: Does the "Hard Fail" model introduce unacceptable friction in high-velocity CI/CD environments?

- False Positives: How do we prevent agentic lawmakers from blocking valid architectural innovations that temporarily break legacy performance budgets?

Technical Friction Points

The approach does not hold when managing legacy monolithic architectures that lack the modularity required for agentic remediation. In such systems, the 'Physical Law' layer often triggers cascade failures that the current remediator cannot isolate without risking production stability.

This build runs on Next.js 16 with Turbopack for high-velocity compilation. Styling uses custom CSS with accessibility guards.

The governance engine, including the agentic remediation loop, is built from custom Node.js scripts and LLM-driven diff analysis. The scripts modify the distribution to be a static export. This is a live use case.

The guardrails will evolve alongside the Identity Architecture.

Quit speaking about standards and start automating them. Then teach the automation to fix itself.


Riddhi Mohan Sharma

Engineering Leader. Global Identity Architecture. M&A Technology Integration. AI Strategy.

Engineering Leader specializing in Global Digital Identity Architecture and M&A Technology Integration. Track record across multi-million dollar P&L, AI strategy, healthcare compliance (GDPR/HIPAA), and Identity platforms scaled to 3.5M+ users.

Framework Attribution

Disclaimer: The views, frameworks, and architectures presented here (including Architecture Is Policy / Ethical Hyper-Velocity and HPPIE) are my personal thoughts and original syntheses. They are inspired by and draw lessons from my broad enterprise-scale research and experience in healthcare identity, M&A integration, and AI governance. They do not represent the views, policies, or practices of my employer and are not based on any specific proprietary information, internal systems, code, metrics, or confidential details from my current or past roles. All examples and implementations are generalized or self-hosted on this personal site.